In Parts 1 and 2 of this ten-part series on the OWASP Top 10, we discussed Injection and Broken Authentication.

To give context to Sensitive Data Exposure, recall that both of those risks describe tactics actively performed by a threat actor:

- Injection occurs when input supplied to a web page does not follow the ‘intended’ rules for what is expected, and that input is crafted to make a system execute a command.

- Broken Authentication occurs when an attacker impersonates a legitimate user, either by obtaining that user’s credentials for a website or by taking over a browsing session that a previous user did not close out properly.

In this blog covering Sensitive Data Exposure, the focus shifts from attacks that are actively performed to an exposure that can be avoided. Leaving sensitive, unencrypted data exposed to the internet is preventable, and the people responsible for personal data should ensure data protection is in place. This is an ongoing, proactive process, because technologies and threat actor tactics, techniques, and procedures (TTPs) change over time.

What Is Sensitive Data Exposure?

Sensitive Data Exposure is the unintended visibility of information which should have been kept private.

Beyond public embarrassment, breaches of private data carry very real costs. When personally identifiable information (PII) or protected health information (PHI) is exposed or stolen, regulatory agencies, such as the HHS Office for Civil Rights (OCR), which enforces HIPAA, can issue steep fines in accordance with breach reporting statutes. Another example is the GDPR (General Data Protection Regulation), which is a part of the EU’s data protection laws. The fines under GDPR for non-compliance are substantial, as described here: https://gdpr.eu/fines/

When people think about data exposure, they typically think of the following types:

- Credit Card Information

- Health Records

- Personal Information (Driver License, voting details, etc.)

- Classified Records of Value (patents, military records or financial records of companies, people, or other entities)

However, many other types of data are also at risk. These include, but are not limited to: exclusive news stories or articles, legal proceedings, criminal evidence, school records, research data, and website credentials. In reality, any information that an entity considers important enough to keep secret should have controls in place to avoid, or at least minimize, the risk of data exposure. Unfortunately, this is easier said than done.

Protecting Data at Rest and in Transit

Data can be exposed in two states: “Data at Rest” and “Data in Transit”. These mean exactly what they sound like. When data is at rest, it is stored, and when data is in transit, it is being moved in some manner, either physically or electronically. In both states, data is susceptible to multiple forms of attack.

While data is at rest, it can be retrieved, viewed, deleted, or used. Policies should therefore define the access controls for who can manipulate the data, when, and how. The following protection recommendations pertain to web-based concerns. Physical access controls and offline environments require additional security measures that are beyond the scope of the threats covered in the OWASP Top 10.

Implement Access Controls

Access Control is based on three security concepts:

- Permissions: Whether you are allowed to create, read, write, or delete an item

- Rights: The ability to act on an item (one is allowed to make a change)

- Privileges: A combination of permissions and rights

The permissions, rights, and privileges are handled by an Access Control Table / Matrix, where the subjects (people), the objects (files as an example) and the privileges are defined. There are different models for handling access control: Discretionary (DAC), Role Based (RBAC), Rule-based, Attribute Based (ABAC) and Mandatory (MAC).

| Access Control Model | Restrictions | Example |
| --- | --- | --- |
| Discretionary Access Control (DAC) | Each object has an owner and the owner defines the access of others. | Microsoft Windows NTFS |
| Role Based Access Control (RBAC) | Roles and groups are used with the administrator defining the privileges for the roles. Typically, a role is defined by job function. | Microsoft Windows Group Policy |
| Rule-based Access Control | Rules are placed against all subjects | Firewall rules |
| Attribute Based Access Control (ABAC) | Rules with multiple attributes and definitions | Software-defined networks |
| Mandatory Access Control (MAC) | Uses labels against the objects and subjects | Military folder labels: Top Secret, Secret, Confidential |

Sample Access Control Table / Matrix with subjects (people), objects (e.g., files), and privileges defined
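To make the matrix idea concrete, here is a minimal sketch of a role-based check in Python. The role names, subjects, and objects are hypothetical and chosen only for illustration; a real deployment would load them from a directory service or policy store rather than from constants in code.

```python
from enum import Flag, auto


class Permission(Flag):
    """The four activities named above: create, read, write, delete."""
    CREATE = auto()
    READ = auto()
    WRITE = auto()
    DELETE = auto()


# Hypothetical roles: each role maps an object name to the permissions it grants.
ROLE_MATRIX = {
    "billing_clerk": {"invoices": Permission.CREATE | Permission.READ},
    "auditor": {
        "invoices": Permission.READ,
        "patient_records": Permission.READ,
    },
    "records_admin": {
        "patient_records": (
            Permission.CREATE | Permission.READ
            | Permission.WRITE | Permission.DELETE
        ),
    },
}

# Hypothetical subjects: people are assigned roles, never permissions directly.
SUBJECT_ROLES = {
    "alice": {"billing_clerk"},
    "bob": {"auditor"},
    "carol": {"records_admin", "auditor"},
}


def is_allowed(subject: str, obj: str, needed: Permission) -> bool:
    """Return True only if one of the subject's roles grants every needed permission.

    Unknown subjects, roles, or objects fall through to a denial (default deny).
    """
    for role in SUBJECT_ROLES.get(subject, set()):
        granted = ROLE_MATRIX.get(role, {}).get(obj, Permission(0))
        if (needed & granted) == needed:
            return True
    return False
```

For example, `is_allowed("bob", "patient_records", Permission.READ)` is granted through the auditor role, while the same call with `Permission.WRITE` is denied. The default-deny fall-through is a design choice: anything not listed in the matrix is refused.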

Establish Data Encryption

Beyond access control, encryption is also important so that even if data is obtained, it is worthless to the attacker without the proper key. Depending on the scheme, that is either a single shared secret key (symmetric encryption) or a key pair (asymmetric encryption), in which a public key encrypts the data and the matching private key decrypts it.

For attacks on data at rest, the key concern is encryption, and this raises a few questions that need to be answered. Is the data kept in clear text, so that anyone who obtains it can read it like any other information? If it is encrypted, are weak keys being used? Is proper key management taking place? Keys are used by encryption algorithms; the stronger the algorithm and the longer the key, the harder it is to break the encryption and manipulate the data object in question. Key management pertains to how keys are created, provided to users, kept, removed / deleted, recovered, and escrowed. To learn more about encryption or keys in general, cryptography and symmetric / asymmetric keys are a good place to start.
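One way to approach the clear text and weak key questions, sketched here with Python’s standard library only: never store a passphrase or credential as-is, and never use a short passphrase directly as a key. Instead, stretch it with a random salt using PBKDF2. The iteration count, salt size, and function names below are assumptions for illustration, not recommendations from this series.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 600_000  # assumed work factor; tune to current guidance and hardware
SALT_BYTES = 16       # assumed salt size
KEY_BYTES = 32        # 256-bit derived value


def derive_key(passphrase: str, salt: bytes) -> bytes:
    """Stretch a passphrase into a 256-bit value with PBKDF2-HMAC-SHA256."""
    return hashlib.pbkdf2_hmac(
        "sha256", passphrase.encode("utf-8"), salt, ITERATIONS, dklen=KEY_BYTES
    )


def new_record(passphrase: str) -> tuple:
    """Return (salt, derived) for storage; the passphrase itself is never stored."""
    salt = secrets.token_bytes(SALT_BYTES)  # fresh random salt per record
    return salt, derive_key(passphrase, salt)


def verify(passphrase: str, salt: bytes, expected: bytes) -> bool:
    """Re-derive and compare in constant time to avoid timing leaks."""
    return hmac.compare_digest(derive_key(passphrase, salt), expected)
```

Because each record gets its own salt, two users with the same passphrase end up with different stored values, and an attacker who obtains the stored data still has to pay the full work factor for every guess.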

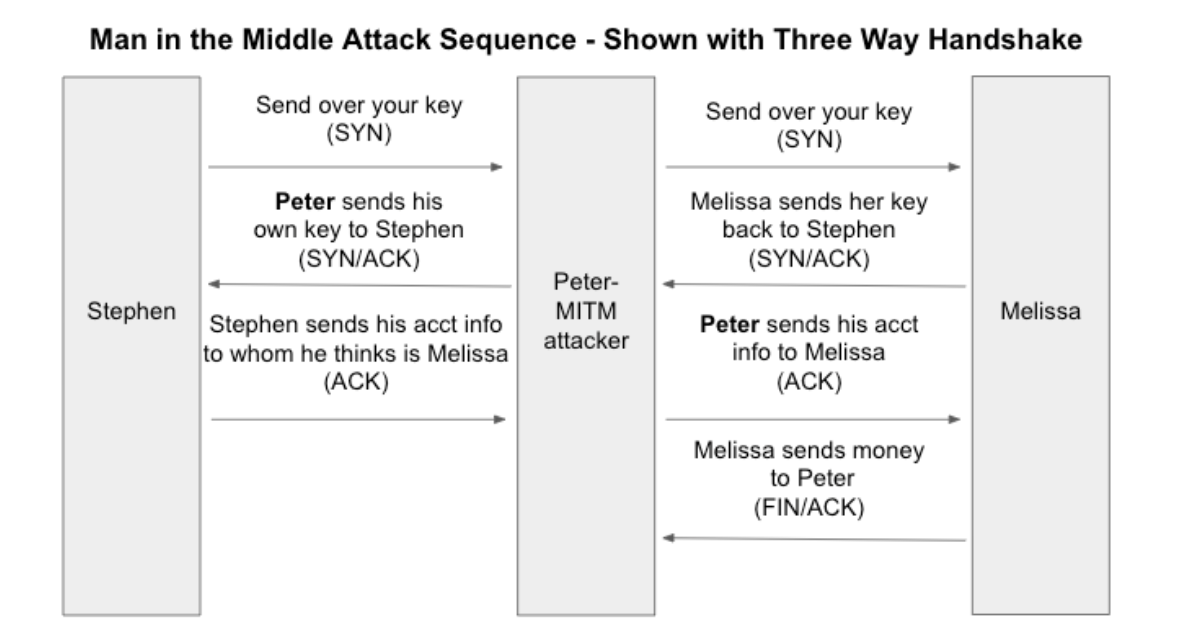

Once the data is no longer at rest and is in transit, it must be encrypted via TLS. For those familiar with the OSI model, TLS runs on top of the Transport Layer (Layer 4, typically TCP) rather than inside it, and is usually mapped to the session or presentation layers. If the transmission is intercepted in a MITM (Man in the Middle) attack or captured with a packet analyzer such as Wireshark, the data is worthless without the key, just as discussed for data ‘at rest’ above.

With data encryption, traffic intercepted in a MITM (Man in the Middle) attack is worthless without the encryption key.
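TLS only defeats interception if the client actually verifies who it is talking to. As a minimal sketch using Python’s standard `ssl` module, the helper below builds a client context that verifies the server certificate and hostname and refuses protocol versions older than TLS 1.2. The function name and the choice of TLS 1.2 as the floor are assumptions for illustration.

```python
import ssl


def strict_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that refuses unverified or outdated connections."""
    # create_default_context() loads the system CA store and enables verification.
    ctx = ssl.create_default_context()
    # Assumed floor: reject anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # These are already the defaults; they are set explicitly so that a later
    # edit cannot silently weaken them without it showing up in review.
    ctx.check_hostname = True
    ctx.verify_mode = ssl.CERT_REQUIRED
    return ctx
```

The returned context can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=strict_client_context())`. A connection to a server with an invalid or mismatched certificate then fails instead of proceeding silently.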

Implement a Data Handling Policy

Encryption and access controls only go so far in preventing exposure of sensitive data. Web applications can also expose data to the public and to threat actors through other routes.

Web applications are vulnerable from a multitude of additional perspectives:

- The source code contains hard-coded information: keys, passwords, tokens

- The user agent does not validate the server certificate

- Data is cached that should not be cached

- Logs are created that contain sensitive data
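Two of the items above, hard-coded secrets and unwanted caching, can be sketched briefly in Python. The helper names and the environment variable used in the example are hypothetical. The headers are standard HTTP response headers, shown here as a plain dictionary rather than through any particular web framework.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not present in the environment."""


def load_secret(name: str) -> str:
    """Read a secret from the environment instead of hard-coding it in source."""
    value = os.environ.get(name)
    if not value:
        # Fail loudly at startup rather than falling back to a built-in default.
        raise MissingSecretError(f"required secret {name} is not set")
    return value


# Response headers that tell browsers and intermediaries not to store the body.
NO_STORE_HEADERS = {
    "Cache-Control": "no-store",
    "Pragma": "no-cache",  # for legacy HTTP/1.0 caches
}


def with_no_store(headers: dict) -> dict:
    """Return a copy of the response headers with caching disabled."""
    merged = dict(headers)
    merged.update(NO_STORE_HEADERS)
    return merged
```

A service might call `load_secret("PAYMENT_API_TOKEN")` once at startup and apply `with_no_store(...)` to every response that carries personal data. An environment variable is only one option; a dedicated secrets manager serves the same purpose.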

Additionally, APIs are a concern for web-based attacks on data transmission, because they let applications communicate by sending data in transit to each other.

Examining each of the concerns above, one can see why establishing a data handling policy is so important.
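As one example of what a data handling policy can look like in code, the sketch below uses Python’s standard `logging` module to scrub sensitive values before a log line is written. The two patterns (a run of 13 to 16 digits that resembles a card number, and `password=`, `token=`, or `api_key=` pairs) are assumptions for illustration. A real policy would define its own list of sensitive fields.

```python
import logging
import re

# Assumed patterns, for illustration only.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators
SECRET_RE = re.compile(r"(?i)\b(password|token|api_key)=\S+")


class RedactingFilter(logging.Filter):
    """Replace sensitive values in a log record before it is emitted."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # merge the format string with its arguments
        message = SECRET_RE.sub(r"\1=[REDACTED]", message)
        message = CARD_RE.sub("[REDACTED-CARD]", message)
        record.msg = message
        record.args = ()  # arguments are already merged into msg
        return True  # keep the record, now scrubbed
```

The filter can be attached with `handler.addFilter(RedactingFilter())`. Attaching it to the handler rather than to a single logger means records from every logger that writes through that handler are scrubbed. Pattern-based redaction is a safety net, not a substitute for keeping sensitive values out of log calls in the first place.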

Strengthen your Data Security with Deepwatch

When examining the data handling, transmission, and storage needs of an organization, and how to prevent, triage, and examine the issues that can arise from mishandling of data, contact Deepwatch. Our staff of highly trained analysts, detection engineers, threat hunters, firewall engineers, and other experts is available to solidify a plan of action to augment your security posture and practice. They are experts at identifying concerns surrounding a multitude of attack frameworks, spotting configuration issues which lead to vulnerabilities, and understanding the pivoting needed to examine various logs in triaging events.

In doing so, Deepwatch helps you to manage your organization’s IT budget by shifting the heavy lifting of triaging and examining security events to us. If we find events to escalate to your organization, we will provide guidance on incident response and be available 24/7/365 to assist in your investigations.

Interested in learning more? Read about Deepwatch Managed Detection and Response services or contact us to speak with an MDR expert.
